Today’s blog posting highlights the latest activity with the Cimetrix Book Club. Our employees constantly strive to develop their skills, share information, and keep up to date with the industry. Part of this effort is an employee book club that many of our team members take part in each month, and from time to time we cover some of their favorites here on our blog!

Today's book is titled "The Art of Unit Testing" by Roy Osherove. The book review is by Westley Kirkham, a Quality Engineer based in Salt Lake City, UT, USA.

“The Art of Unit Testing” guides the reader step by step from writing the first simple tests to developing robust test sets that are trustworthy, maintainable and readable.

In the first section, Osherove explains what a unit test is, the properties of good unit tests, and why they are so important. The lion's share of the book is dedicated to the nitty-gritty of writing and maintaining unit tests specifically, and test suites generally. The early part of that material shows in depth how mocks, stubs, and isolation frameworks are used to test your code. The last section discusses how to deal with resistance to change from co-workers and management if you're trying to introduce Test-Driven Development or Agile methodologies, as well as how to deal with legacy code. Osherove also shares his insights on which tools he believes are the best aids in unit testing. ReSharper is one of his favorites, but he also reviews NSubstitute, Moq, CodeRush and others.
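To make the stub and mock distinction concrete, here is a minimal sketch in Python using the standard library's `unittest.mock`. The book's own examples are in C#, so this is our own illustration rather than code from the book, and `LoginManager`, `user_store`, and `logger` are hypothetical names invented for it. The working assumption is that a stub only feeds canned data to the code under test, while a mock is the fake object the test actually asserts against.

```python
from unittest.mock import Mock


class LoginManager:
    """Hypothetical class under test: checks a password and logs failures."""

    def __init__(self, user_store, logger):
        self.user_store = user_store
        self.logger = logger

    def login(self, user, password):
        if self.user_store.password_for(user) == password:
            return True
        self.logger.warn(f"failed login for {user}")
        return False


def test_login_with_wrong_password_logs_warning():
    # Stub: supplies a canned answer; the test never asserts on it.
    user_store = Mock()
    user_store.password_for.return_value = "secret"

    # Mock: the fake that the test asserts against.
    logger = Mock()

    result = LoginManager(user_store, logger).login("alice", "wrong")

    assert result is False
    logger.warn.assert_called_once_with("failed login for alice")


test_login_with_wrong_password_logs_warning()
```

Both fakes are created with the same `Mock` class here; what makes one a stub and the other a mock is only how the test uses it.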

One section that stood out to our team was Osherove's three pillars of a good unit test—trustworthiness, maintainability, and readability.

Trustworthy tests are up-to-date, simple and correct. There are no duplicate tests, and none of them test obsolete functionality or functionality that has been removed. Each unit test checks only one thing and doesn't conflict with other tests. The bugs a test finds are actual bugs in the code, not bugs in the test.

Maintainable tests are flexible, and don't break with each minor change to the product. The tests are isolated. They are not over-specified and they are parameterized.
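As a rough illustration of a parameterized test that is not over-specified, the sketch below uses Python's built-in `unittest` and its `subTest` context manager. The function `is_valid_port` is a hypothetical example of ours, not something from the book. The test checks only the observable result for each input, so the implementation can change freely without breaking it.

```python
import unittest


def is_valid_port(value):
    """Hypothetical function under test: accepts TCP port numbers 1-65535."""
    return isinstance(value, int) and 1 <= value <= 65535


class IsValidPortTests(unittest.TestCase):
    def test_boundary_values(self):
        # One table of inputs and expected results instead of four
        # near-identical test methods.
        cases = [
            (0, False),
            (1, True),
            (65535, True),
            (65536, False),
        ]
        for value, expected in cases:
            # subTest reports each failing case separately.
            with self.subTest(value=value):
                self.assertEqual(is_valid_port(value), expected)
```

Saved in a test module, this can be run with `python -m unittest`. Adding a new edge case is a one-line change to the `cases` table.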

Readable tests are easy to understand and do not require the developer or tester who comes after you to spend extra time understanding what you've written. The test names are descriptive, and the asserts are meaningful. Any failures or issues caught will lead the developer in the right direction.
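Here is a small before-and-after sketch of what readability can look like in practice, again in Python. The function `parse_version` and both tests are hypothetical examples of ours. The second test follows a "unit, scenario, expected result" naming pattern, which is one common convention and not the only reasonable one.

```python
def parse_version(text):
    """Hypothetical function under test: turns "1.2.3" into (1, 2, 3)."""
    return tuple(int(part) for part in text.split("."))


# Hard to read: the name says nothing about what is being verified,
# and a failure gives no context.
def test_1():
    assert parse_version("1.2.3") == (1, 2, 3)


# Easier to read: the name states the unit, the scenario, and the expected
# result, and the assert message points at what went wrong.
def test_parse_version_three_part_string_returns_integer_tuple():
    text = "1.2.3"
    actual = parse_version(text)
    assert actual == (1, 2, 3), f"parse_version({text!r}) returned {actual!r}"
```

Both tests check the same behavior. The difference only shows up when one fails and someone else has to work out why.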

These three pillars should apply to all that we write, not just tests.

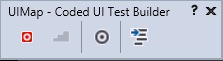

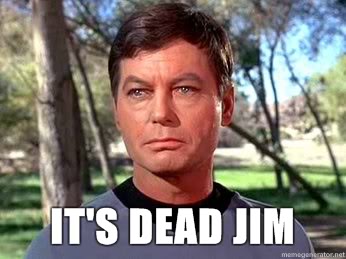

At Cimetrix, much of what Osherove teaches is already integrated into our engineering culture. As part of our implementation of Agile, developers write unit tests to verify that the functionality they have coded is correct. The code and its tests are then reviewed by another developer and a member of the QE team to ensure that common use cases and important edge cases are covered and that the functionality is complete. All code must follow naming conventions and styles verified through ReSharper. For all of our products, unit tests are run on each build, and integration tests are run nightly.

Osherove's lessons on unit testing implementation, testing suite organization, and test-driven development integration are simple and practical. This book would benefit any team looking to improve the fidelity of its software products and the efficiency of its engineers.

The Cimetrix Resource Center is a great way to familiarize yourself with standards within the industry as well as find out about new and exciting technologies.

This short but dense book was written to guide software developers through their journey of building user interfaces. While it was targeted specifically for web and mobile user interfaces, the general topics and suggestions presented will benefit almost anyone developing any kind of software. The general theme of the book ties directly with the title: make the end user think as little as possible while using your software.

This book was designed as an overview of the C# programming language, covering most topics broadly while going into depth on some of them. It was designed to be helpful for even the most novice developers while still being useful to advanced programmers looking to sharpen their craft. This was perfect for our group because we had a mix of aspiring developers (or developers who hadn’t spent much time programming yet), experienced developers who were new to C#, and experienced developers just looking to get better and learn new things about the language and about best practices.